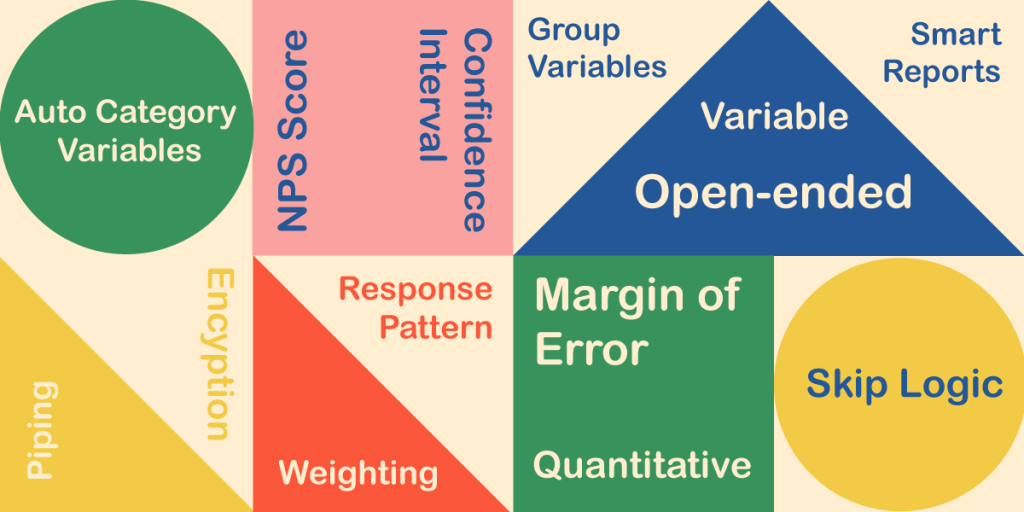

Research terminology can be a minefield, as there are so many elements that go into a successful project. From bread-and-butter concepts like questionnaire and sample size to the more complex concepts like derived variables and weighting, a researcher can be forgiven for needing to look up the meaning of a phrase once in a while.

That’s why we’ve created this comprehensive glossary of research terminology!

It also includes some phrases specific to Snap XMP – the all-in-one survey platform from Snap Surveys.

Accessibility

The idea that anybody should be able to complete a survey regardless of any disabilities they may have. For example, offering subtitles on videos for hearing-impaired people or enabling a large font for those with limited eyesight.

Analysis

Understanding and interpreting the feedback you receive, usually through running reports. Analysis can take your data and turn it into quantifiable statistics, such as “92% of employees agree or strongly agree that they enjoy working from home”.

Analyst

An analyst uses the response data to understand the respondents’ answers and apply the results to help improve the organisation. There are Snap XMP log-ins specifically for clients and colleagues (who are analysts) to view survey results online, helping to promote collaboration through easy sharing.

Anonymous

There are certain types of surveys where anonymity is required for participants. The best example of this is an employee engagement survey – where employees might feel that being too honest and saying something negative could get them in trouble. Therefore, ensuring each respondent is anonymous can inspire confidence and lead to more honest feedback.

Attach-It (Snap Surveys term)

The ability to upload a file, photo, audio clip or signature on a survey within our platform, Snap XMP.

Audit Log

This lets you see who has made a change to a survey and what kind of change they made. When you are collaborating as part of a large team, this helps you know which shared user has made a particular change to the survey.

Auto Category Variables

Auto category variables are used to analyse open-ended questions, such as comments, dates, times and quantities, by categorising the responses. For example, when creating a word cloud from the respondents’ comments, an auto category variable is automatically created to determine how frequently the words are used. The word cloud displays the words that appear most often as the largest so you can see what was most important to your respondents.

Bar Chart

Bar charts are a graphical way of presenting and analysing your survey’s responses. A bar chart displays the results using horizontal or vertical bars where the bar size represents a specific result, often the number or percentage of responses to a question answer – like 92% of people own a smartphone.

CAPI

Computer-assisted personal interviews. These are face-to-face interviews that are conducted by an interviewer using a laptop, a tablet or a phone.

Carousel

Used when there is a core question about multiple things, such as “What do you think about these holiday destinations?”. Each destination appears on a separate slide of a carousel. The respondent clicks a rating out of 10 for the first destination, then clicks ‘next’ and the second destination appears.

CATI

This is where telephone interviewers type a respondent’s answer directly into an online survey – regardless of the device. It stands for computer-assisted telephone interviewing.

Closed Question

Closed questions force respondents to pick from predefined answers. Sometimes multiple answers can be selected. Answers can be presented in a random order to prevent bias, and they can be masked (see “Masking”). Answers can also be set as exclusive: if you ask “Which of the following do you use: A, B or C?” and want to include “None” as a fourth option, set “None” as exclusive so that it cannot be selected alongside the others.

Confidence Interval

A confidence interval is a range of values around a sample estimate, such as the mean, that is expected to contain the true population value at a stated confidence level. It expresses the degree of uncertainty in a sampling method. Confidence intervals are most often constructed using confidence levels of 95% or 99%.
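
As a rough sketch of the arithmetic, the Python below builds a 95% confidence interval for a mean using the normal approximation. The ratings are made up for illustration, and the function name and the z-value of 1.96 are assumptions of this example rather than anything taken from Snap XMP. For very small samples, a t-distribution would usually be more appropriate.

```python
import math
import statistics

def mean_confidence_interval(values, z=1.96):
    """Return (lower, upper) bounds for the mean using a normal approximation.

    z=1.96 corresponds to a 95% confidence level; 2.576 corresponds to 99%.
    """
    mean = statistics.mean(values)
    # Standard error: sample standard deviation divided by the square root of n.
    standard_error = statistics.stdev(values) / math.sqrt(len(values))
    return mean - z * standard_error, mean + z * standard_error

# Hypothetical ratings out of 10 from ten respondents.
ratings = [7, 8, 6, 9, 7, 8, 7, 6, 8, 9]
lower, upper = mean_confidence_interval(ratings)
print(f"95% confidence interval for the mean: {lower:.2f} to {upper:.2f}")
```

A wider interval signals more uncertainty; collecting more responses narrows it.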

Confidence Level

A percentage that expresses how confident you are that the survey results accurately reflect the wider population. The confidence level is usually set to 90%, 95% or 99%. If it’s any lower, it could be argued that the sample size needs to be reconsidered before progressing further.

Cookies

Small data files dropped onto the browser or device a survey is completed from, helping the researchers or survey platform providers to understand the user experience better.

Data

The collection of feedback you receive. If you have chosen rating scale questions, your data can be presented like “83% of people ‘agree’ or ‘strongly agree’ that working from home provides a better work-life balance”.

Data Security

Surveys can hold a lot of sensitive information about people and therefore respondents expect their data to be handled securely.

Database Link

You may have an external file (such as an Excel spreadsheet) of participants’ names and contact details, which can then be uploaded to a survey platform. The platform can then send the surveys out to all contacts in a matter of seconds.

Derived Variables

These are variables where the output is created from the answers to previous questions. For example, if a respondent says they have 5 cats and 6 dogs, a derived variable could be used to show the total number of pets (11). The most common use of derived variables is to group responses into categories for analysis.
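
The pets example can be sketched in a few lines of Python. The function names and the category boundaries are hypothetical, chosen only to show a derived total and a derived grouping.

```python
def total_pets(response):
    """Derive a total from two answers the respondent has already given."""
    return response["cats"] + response["dogs"]

def pet_owner_category(total):
    """Group the derived total into categories for analysis (assumed bands)."""
    if total == 0:
        return "No pets"
    if total <= 2:
        return "1-2 pets"
    return "3 or more pets"

response = {"cats": 5, "dogs": 6}
total = total_pets(response)
print(total, pet_owner_category(total))  # 11 3 or more pets
```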

Distribute

How you choose to send your surveys out to people, such as by email, QR code, SMS text message, through the post, or face-to-face.

Editable URL

When sharing surveys, editing the URL to include your brand name helps participants to trust the link.

Encryption

The scrambling of sensitive data when processed online to ensure it remains secure.

Face-to-face Interview

Where feedback is given directly to an interviewer in person. It can be done with paper, smartphones, and tablets. Face-to-face interviews can happen anywhere – such as in the street, at a visitor attraction, or if an interviewer goes door to door.

Feedback

The thoughts and opinions of those who respond to a survey.

Filter

Highlight specific answers or results – such as how you might filter a certain brand or shoe size when shoe shopping on an e-commerce website. For surveys, you can filter to see results for specific sub-groups of respondents, for example to show results only for younger respondents.

GDPR

The General Data Protection Regulation: an EU regulation, in force since 2018, for the storing and processing of personal data, with a key focus on individuals’ right to privacy and consent to being tracked online.

Geolocation

Turn on geolocation to find out where respondents submitted your online survey from.

Group Variables

Link similar questions (such as those around someone’s personality in the workplace) into a group so that the group of questions can be analysed as a whole or used as a single axis for tables or charts.

Interactive

Surveys that use interactive features to be engaging for respondents, such as using images of the Eiffel Tower to represent France instead of plain text.

Interviewees

Another name for respondents.

ISO 27001

An international standard for data security that data holders aspire to meet. Achieving an ISO 27001 accreditation inspires confidence that the organisation takes data security seriously.

Kiosk Mode

These are fixed stations in a public place for people to give feedback in person, although the survey is run online. When someone completes the survey (or abandons it) the survey will reset in time for the next respondent.

Leading Question (or Loaded Question)

A question that is worded in a way that leads someone to giving a certain answer, which may not necessarily represent what they really think or feel.

Map Control

Clickable images in a survey that respondents use to answer questions. For example, clicking an image of the British flag to indicate their nationality.

Margin of Error

The margin of error is an estimate of how accurate the feedback to a survey is – and how likely it is to reflect the opinions of people outside of the survey sample.
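
One common way to estimate the margin of error for a percentage result is the normal-approximation formula z × √(p(1 − p) / n). The sketch below assumes a simple random sample, a 95% confidence level (z = 1.96) and the most cautious proportion of 0.5. The function name is illustrative only.

```python
import math

def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate margin of error for a proportion from a simple random sample."""
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)

# Larger samples give a smaller margin of error.
for n in (100, 400, 1000):
    print(n, f"{margin_of_error(n):.1%}")
```

With 100 respondents the margin is roughly 10 percentage points either way; quadrupling the sample to 400 halves it.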

Masking

Ensuring only relevant answers are shown based on responses to previous questions. For example, if someone selects 2 out of 5 supermarkets that they regularly shop at, the 3 supermarkets that weren’t selected will not appear in further questions on the topic.
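
The supermarket example could be sketched like this in Python. The supermarket names and the `mask_options` helper are placeholders for illustration, not part of any survey platform.

```python
SUPERMARKETS = [
    "Supermarket A",
    "Supermarket B",
    "Supermarket C",
    "Supermarket D",
    "Supermarket E",
]

def mask_options(all_options, selected):
    """Keep only the options chosen in an earlier question, in their original order."""
    chosen = set(selected)
    return [option for option in all_options if option in chosen]

# The respondent said they regularly shop at two of the five supermarkets.
earlier_answer = ["Supermarket D", "Supermarket B"]
follow_up_options = mask_options(SUPERMARKETS, earlier_answer)
print(follow_up_options)  # ['Supermarket B', 'Supermarket D']
```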

Mean, Mode, and Median

Ways of estimating the ‘average’ number in multiple results to the same question. If 10 groups of people give feedback on their day at a theme park as a number out of 100, the mean, mode, and median methods can calculate what number out of 100 best represents all of the feedback.
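
To see how the three can differ, here is a small sketch using Python's built-in `statistics` module. The ten scores are invented for the theme park example.

```python
import statistics

# Hypothetical scores out of 100 from ten groups of visitors.
scores = [70, 85, 85, 90, 60, 75, 85, 95, 80, 65]

mean_score = statistics.mean(scores)      # sum of the scores divided by how many there are
median_score = statistics.median(scores)  # middle value once the scores are sorted
mode_score = statistics.mode(scores)      # the most frequently given score

print(mean_score, median_score, mode_score)
```

Here the mean is 79, the median is 82.5 and the mode is 85, so the choice of ‘average’ changes the headline figure.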

Min/Max Response

In Snap’s validation, the min/max response is used in multiple choice questions where the respondent can select more than one answer. It sets the range of answers allowed, e.g. a minimum of 1 answer but a maximum of 3 answers in a question with 5 choices.
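
As an illustration of the rule (not Snap's own implementation), a min/max check could look like this in Python. The function name and default limits are assumptions taken from the example above.

```python
def within_min_max(selected, minimum=1, maximum=3):
    """Return True if the number of selected answers is inside the allowed range."""
    return minimum <= len(selected) <= maximum

print(within_min_max(["A", "C"]))            # True: two answers is within 1 to 3
print(within_min_max([]))                    # False: nothing selected
print(within_min_max(["A", "B", "C", "D"]))  # False: four answers is too many
```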

Mobile Survey

Surveys that are completed on a mobile device – such as a smartphone or tablet. See also “Offline Interviewer” below.

Multi-language

The ability to present your questionnaire in multiple languages opens the survey up to a wider audience. This can lead to a better response rate and ensure a broader range of opinions are given.

Navigation Buttons

Where respondents click to navigate a survey, such as a forward-pointing arrow to signify the need to click for the next question.

NPS Score

A customer satisfaction score that is calculated by asking customers how likely they would be to recommend you, on an 11-point scale of 0 to 10.
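
Under the standard Net Promoter Score convention, respondents who answer 9 or 10 are promoters, those who answer 0 to 6 are detractors, and the score is the percentage of promoters minus the percentage of detractors. A minimal Python sketch, with made-up ratings and an illustrative function name:

```python
def net_promoter_score(ratings):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    promoters = sum(1 for rating in ratings if rating >= 9)
    detractors = sum(1 for rating in ratings if rating <= 6)
    return 100 * (promoters - detractors) / len(ratings)

# Hypothetical answers from ten customers.
ratings = [10, 9, 9, 8, 7, 7, 6, 5, 10, 3]
print(net_promoter_score(ratings))  # 10.0
```

The score can range from -100 (everyone is a detractor) to +100 (everyone is a promoter).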

Offline Interviewer

Snap Surveys’ exclusive app for face-to-face interviews that lets you conduct online surveys while out in the field – even when there is no steady internet connection. Your survey automatically syncs when you get back online.

OMR – Optical Mark Recognition

Also called Optical Mark Reading. A software process that reads or scans information that people have marked on paper surveys, such as ticks or crosses corresponding to question answers. This can speed up the data input process and reduce data input errors.

Online Survey

A survey that is created, distributed, and completed online. It can be started from a link in a text message or email, or by scanning a QR code on a smartphone.

On-premises Servers / Self-hosting Survey Data

Organisations that run surveys may choose to self-host the data on their own premises, perhaps due to different security requirements in their region. This is an alternative to letting the survey platform providers take care of data security.

Open-ended Question

Open-ended questions are questions that require more than simply “yes” or “no” answers – they are designed to delve deeper into the respondent’s opinion.

Opt Out

Allows participants to be excluded from a survey and from invitations to complete it.

Panels

Companies that pay people a small commission to respond to online surveys. Panel surveys are often completed by students.

Paper Survey

Surveys that are printed out and filled in manually, often found in ‘captive audience’ settings like a doctor’s waiting room or after a focus group.

Partial Completed

When someone only fills in a portion of a survey. In regular online surveys, the respondent can return to their responses so far and complete the survey at a later time. In kiosk mode, the survey will optionally collate a partial response and reset back to the beginning in time for the next respondent.

Participants

The group of people you invite to participate or respond to your survey. (Note – this doesn’t mean they respond.)

Piping

Where answers from previous questions are fed into upcoming questions or other elements of the survey such as section headings. This is known as Text Substitution in Snap XMP.
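
The idea can be sketched with ordinary Python string formatting. This is a generic illustration only: the `{placeholder}` syntax, the `pipe` helper and the question wording are assumptions, and Snap XMP's Text Substitution has its own syntax.

```python
def pipe(template, answers):
    """Insert earlier answers into the text of a later question."""
    return template.format(**answers)

# Answer captured from an earlier question (hypothetical).
answers = {"favourite_destination": "Lisbon"}

question = pipe("What did you enjoy most about {favourite_destination}?", answers)
print(question)  # What did you enjoy most about Lisbon?
```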

Pre-seed (Snap Surveys term)

Use data from a database or other source to provide final or default answers.

QR Code

A scannable bar code that can be used to launch an online survey on someone’s smartphone.

Qualitative Feedback

Qualitative feedback explores ideas and thought processes, usually in a conversation form via focus groups.

Quantitative

Quantitative feedback is usually in the form of surveys and is used to generate statistics. For example – 71% of people know who they will vote for in the next election.

Questionnaire

This is what non-researchers usually refer to as a survey – the questions and answer boxes that respondents fill in to provide their feedback.

Rating Scale Question

A question that offers a scale of options, from which the respondent selects the one that best suits their feelings, usually how much they ‘agree’ with a statement such as “I am proud to work for my organisation”.

Ratings Check (Snap Surveys term)

This is the name we give to the way our software checks that people can only give one answer for first, second, third etc. For example, in response to the question “What are your top 3 supermarkets?”, each rank can be given to only one supermarket.

Respondents

The people who actually take the time to respond to your survey.

Response Pattern

Ensures responses are typed in appropriately. For example, if a respondent is giving their post code, the answer cannot be submitted unless it conforms to the correct styling of post codes. This helps to avoid inaccurate answers and keeps the respondent on task.
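
A response pattern is often expressed as a regular expression. The Python sketch below uses a deliberately simplified pattern for UK post codes; it is an assumption for illustration and does not cover every valid or invalid post code.

```python
import re

# Simplified UK post code shape: 1-2 letters, a digit, an optional letter or digit,
# an optional space, then a digit and two letters (e.g. "SW1A 1AA").
POSTCODE_PATTERN = re.compile(r"[A-Z]{1,2}[0-9][A-Z0-9]? ?[0-9][A-Z]{2}")

def matches_postcode_pattern(answer):
    """Return True if the answer has the general shape of a UK post code."""
    return POSTCODE_PATTERN.fullmatch(answer.strip().upper()) is not None

print(matches_postcode_pattern("sw1a 1aa"))  # True
print(matches_postcode_pattern("12345"))     # False
```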

Routing / Skip Logic

If someone tells you in question 1 that they don’t have children, routing within the survey will mean the respondent skips any follow-up questions for those who do have children. It’s a way of keeping the survey agile and relevant for each respondent.
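
In code terms, routing is a rule that picks the next question based on earlier answers. A minimal Python sketch, using hypothetical question IDs (Q1 “Do you have children?”, Q2 a follow-up for parents, Q3 the next general question):

```python
def next_question(current, answers):
    """Return the ID of the next question, or None when the survey is finished."""
    if current == "Q1":
        # Only respondents with children see the follow-up question Q2.
        return "Q2" if answers.get("Q1") == "Yes" else "Q3"
    if current == "Q2":
        return "Q3"
    return None

print(next_question("Q1", {"Q1": "No"}))   # Q3
print(next_question("Q1", {"Q1": "Yes"}))  # Q2
```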

Sample Size

The number of people you select to participate in your survey – whose views will be taken to represent the wider population. Generally speaking, the larger the sample size, the more accurate the results will be.

Section 508 (US)

Section 508 compliance pertains to US government agencies only. Snap Surveys has several government customers, and surveys created in Snap Surveys software are 508 compliant.

Servers

When people respond to a survey, the data it creates is held on servers. The servers must be secure because the data contains personal information, such as a respondent’s name and contact details. Usually, a survey software provider will host this data on their servers and they will conform to data security standards in their region.

Slider Control

A clickable, sliding control that can move left and right or up and down to change a setting – such as the volume on a computer. In a survey, a respondent may drag the slider along a numbered list of 1 to 10 to say how much they enjoyed a holiday or a day at a theme park.

Smart Reports – exclusive to Snap Surveys

Smart Reports are exclusive to Snap Surveys and let you dig deep into your feedback and create reports that can be run again and again. Perfect for ongoing projects.

Survey Platform

Survey software that allows for the questionnaire design and distribution, as well as analysis and reporting. It also includes the secure hosting of survey data.

Template

A new survey that features preset elements, such as an organisation’s branding and logo, or specific questions. It helps you get your project underway quicker and keep things consistent.

Text Substitution (Snap Surveys term)

The same as Piping.

Validation

Making sure answers are of the correct type before the participant moves to the next question. For example, if you are asking for someone’s age, a number in a suitable range should be input as the response, otherwise they won’t be able to move on.
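
The age example might be checked like this in Python. The function name and the range of 16 to 120 are assumptions for illustration; a real survey would set whatever range suits its audience.

```python
def is_valid_age(raw_answer, minimum=16, maximum=120):
    """Return True only if the answer is a whole number inside the allowed range."""
    try:
        age = int(raw_answer.strip())
    except ValueError:
        # Not a whole number at all, e.g. "twenty" or "3.5".
        return False
    return minimum <= age <= maximum

print(is_valid_age("34"))      # True
print(is_valid_age("twenty"))  # False
print(is_valid_age("250"))     # False
```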

Variable

Variables are the things that differentiate each respondent, such as age or gender.

WCAG 2.1 Guidelines

Web Content Accessibility Guidelines that questionnaires should meet in order to be deemed “accessible”.

Weighting

Feedback from surveys should reflect a broad population, but sometimes a sample size is not reached and you may not get a balanced group of people. Maybe there are more women than men, or a higher number of affluent people than you expect. To balance this out, you can ‘weight’ an under-represented segment of respondents and have them count as more people than there actually were. For example, if twice as many women as men responded, then each man’s response can count as two people. You can also weight the survey sample up to the population: for example, if a theme park surveys 1,000 people out of 10,000 daily visitors, then each respondent can be counted as 10 people.
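
Here is a small Python sketch of the women-and-men example. The six responses are invented, and the helper name and data layout are assumptions; each man's answer is given a weight of 2 so that both groups count equally.

```python
def weighted_percentage(responses, weights, answer):
    """Percentage giving `answer`, with each response counted by its group's weight."""
    total = sum(weights[group] for group, _ in responses)
    matching = sum(weights[group] for group, given in responses if given == answer)
    return 100 * matching / total

# Hypothetical sample: four women and two men, each with a yes/no answer.
responses = [
    ("woman", "yes"), ("woman", "yes"), ("woman", "yes"), ("woman", "no"),
    ("man", "no"), ("man", "yes"),
]

unweighted = weighted_percentage(responses, {"woman": 1, "man": 1}, "yes")
weighted = weighted_percentage(responses, {"woman": 1, "man": 2}, "yes")
print(round(unweighted, 1), weighted)  # 66.7 62.5
```

Weighting pulls the headline figure from about 67% down to 62.5%, because the men's answers now carry as much influence as the women's.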

Word Cloud

A selection of multiple answers from open-ended questions displayed within an image. If an answer was given multiple times, it will appear larger in the graphic. For example, if the question is “What do you like most about working from home?” then the graphic might feature words and phrases such as “flexibility”, “no commuting”, and “work-life balance”.
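
Behind a word cloud is a simple frequency count. The Python sketch below counts single words across a few invented comments; the stop-word list and function name are assumptions, and a real tool would typically also handle phrases.

```python
import re
from collections import Counter

# Common words to leave out of the cloud (a tiny, assumed list).
STOP_WORDS = {"and", "more", "the", "a"}

def word_frequencies(comments):
    """Count how often each word appears across all comments, ignoring case."""
    words = []
    for comment in comments:
        words.extend(re.findall(r"[a-z'-]+", comment.lower()))
    return Counter(word for word in words if word not in STOP_WORDS)

comments = [
    "Flexibility",
    "Better work-life balance and flexibility",
    "No commuting, more flexibility",
]
frequencies = word_frequencies(comments)
print(frequencies.most_common(1))  # [('flexibility', 3)]
```

The word with the highest count would be drawn largest in the graphic.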